AI Answer Visibility & Share of Voice Benchmark

Research Scope and Methodology

This part of the page publishes structured monthly AI visibility benchmark results for UK-based Generative Engine Optimisation service providers.

The benchmark measures observed brand surfacing behaviour across generative AI platforms using a fixed, repeatable testing framework. All data is derived exclusively from third-party Otterly.ai brand visibility tracking.

Testing commenced on 24 November 2025 and is conducted in discrete monthly intervals. Each evaluation period is independently reported and must not be interpreted as cumulative with prior or subsequent periods.

The benchmark measures AI surfacing behaviour only. It does not measure website traffic, traditional search engine rankings, lead generation, or commercial performance outcomes.

All reported metrics reflect AI-generated responses recorded by Otterly.ai within the defined evaluation window.

Testing Controls and Standardisation

To ensure cross-period comparability, the following controls are maintained across all monthly intervals.

The testing location is set to the United Kingdom.

The same ten predefined GEO-intent queries are used for every evaluation period.

Visibility data is aggregated across a full monthly window rather than isolated query snapshots.

No manual adjustments, weighting, exclusions, or estimations are applied beyond Otterly.ai recorded outputs.

Where placement data is unavailable, no inferred values are substituted.

This ensures dataset integrity, transparency, and repeatability.
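The "no inferred values" control can be illustrated with a minimal Python sketch. It assumes average brand position is a simple mean of recorded positions, as described in the metric definitions on this page. The function names and the sample positions are hypothetical, and Otterly.ai's internal calculation is not documented here.

```python
from statistics import mean
from typing import Optional, Sequence


def average_brand_position(positions: Sequence[int]) -> Optional[float]:
    """Mean position across recorded responses, or None when none were recorded."""
    if not positions:
        # Unavailable stays unavailable: no estimate is substituted.
        return None
    return round(mean(positions), 2)


def format_position(value: Optional[float]) -> str:
    """Render a table cell, keeping missing data explicit."""
    if value is None:
        return "No results provided by Otterly.ai"
    return f"{value:.2f}"


if __name__ == "__main__":
    # Hypothetical positions for illustration only, not benchmark data.
    print(format_position(average_brand_position([1, 2, 2])))
    print(format_position(average_brand_position([])))
```

Returning `None` rather than a default number keeps a missing value from being mistaken for a strong or weak placement in any later comparison.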

Metrics Defined

- AI visibility refers to a recorded brand mention within AI-generated responses to the predefined GEO-intent queries.

- Share of voice is the percentage of total recorded brand mentions within the monitored query set that are attributed to a specific domain during a defined time period.

- Brand coverage represents the proportion of tracked GEO-intent queries in which a brand appears at least once.

- Average brand position represents the mean ranking position at which a brand is surfaced within recorded AI responses.

- Rank position reflects ordering by total brand mentions within the evaluation interval.

- AI retrieval behaviour describes the observed selection and prioritisation patterns of generative models when responding to GEO-intent queries.
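As a worked illustration of the share of voice and rank position definitions above, the following Python sketch recomputes both from the Month 1 brand mention counts published in the results table on this page. It assumes share of voice is calculated across the six tracked organisations only. The function names are illustrative, and Otterly.ai's own calculation may differ in detail.

```python
from typing import Dict, List, Tuple

# Month 1 brand mention counts as published in the results table on this page.
MONTH_1_MENTIONS: Dict[str, int] = {
    "ClickSlice": 93,
    "Bird Marketing": 73,
    "Passion Digital": 71,
    "Exposure Ninja": 60,
    "NeuralAdX Ltd": 33,
    "Blue Array": 29,
}


def share_of_voice(mentions: Dict[str, int]) -> Dict[str, float]:
    """Return each brand's percentage of all recorded mentions."""
    total = sum(mentions.values())
    if total == 0:
        return {brand: 0.0 for brand in mentions}
    return {brand: 100.0 * count / total for brand, count in mentions.items()}


def rank_positions(mentions: Dict[str, int]) -> List[Tuple[int, str]]:
    """Order brands by total mentions, highest first, numbering from 1."""
    ordered = sorted(mentions.items(), key=lambda item: item[1], reverse=True)
    return [(rank, brand) for rank, (brand, _) in enumerate(ordered, start=1)]


if __name__ == "__main__":
    sov = share_of_voice(MONTH_1_MENTIONS)
    for rank, brand in rank_positions(MONTH_1_MENTIONS):
        count = MONTH_1_MENTIONS[brand]
        print(f"{rank}. {brand}: {count} mentions, {sov[brand]:.0f}% share of voice")
```

Under this assumption, the values rounded to whole percentages agree with the Month 1 table (for example, 93 of 359 total mentions is approximately 26 percent).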

AI Platforms Included

Visibility data is recorded across the AI platforms where GEO service recommendations are generated.

- ChatGPT

- Perplexity

- Google AI Overview

- Microsoft Copilot

The benchmark measures cross-platform surfacing frequency rather than single-platform dominance.

GEO-Intent Query Set

The benchmark uses a fixed query set representing commercial and comparative research behaviour in the UK GEO services market.

- What are the top 10 generative engine optimisation companies in the UK?

- Which generative engine optimisation company is performing best at the moment in the UK?

- What is the cost of generative engine optimisation in the UK?

- How to add quotations for generative engine optimisation?

- How to add citations for generative engine optimisation?

- How to add fluency for generative engine optimisation?

- How to add authority for generative engine optimisation?

- How do I add statistics to my website for generative engine optimisation?

- How does using high-quality citations, quotations, and statistics enhance content for generative engine optimisation?

- How to optimise my website for Grok-4?

This query set remains unchanged across all evaluation periods to preserve longitudinal consistency.

Interpretation Framework

The benchmark is comparative and relative in nature.

Higher brand mentions indicate more frequent AI surfacing within recorded responses.

Higher share of voice indicates greater proportional presence relative to comparator agencies.

Higher brand coverage indicates broader inclusion across the query set.

Lower average brand position indicates stronger placement prominence when surfaced.

Rank position reflects total mention ordering only and should be interpreted alongside placement metrics.

No metric is presented in isolation.

Monthly AI Visibility Benchmark Results

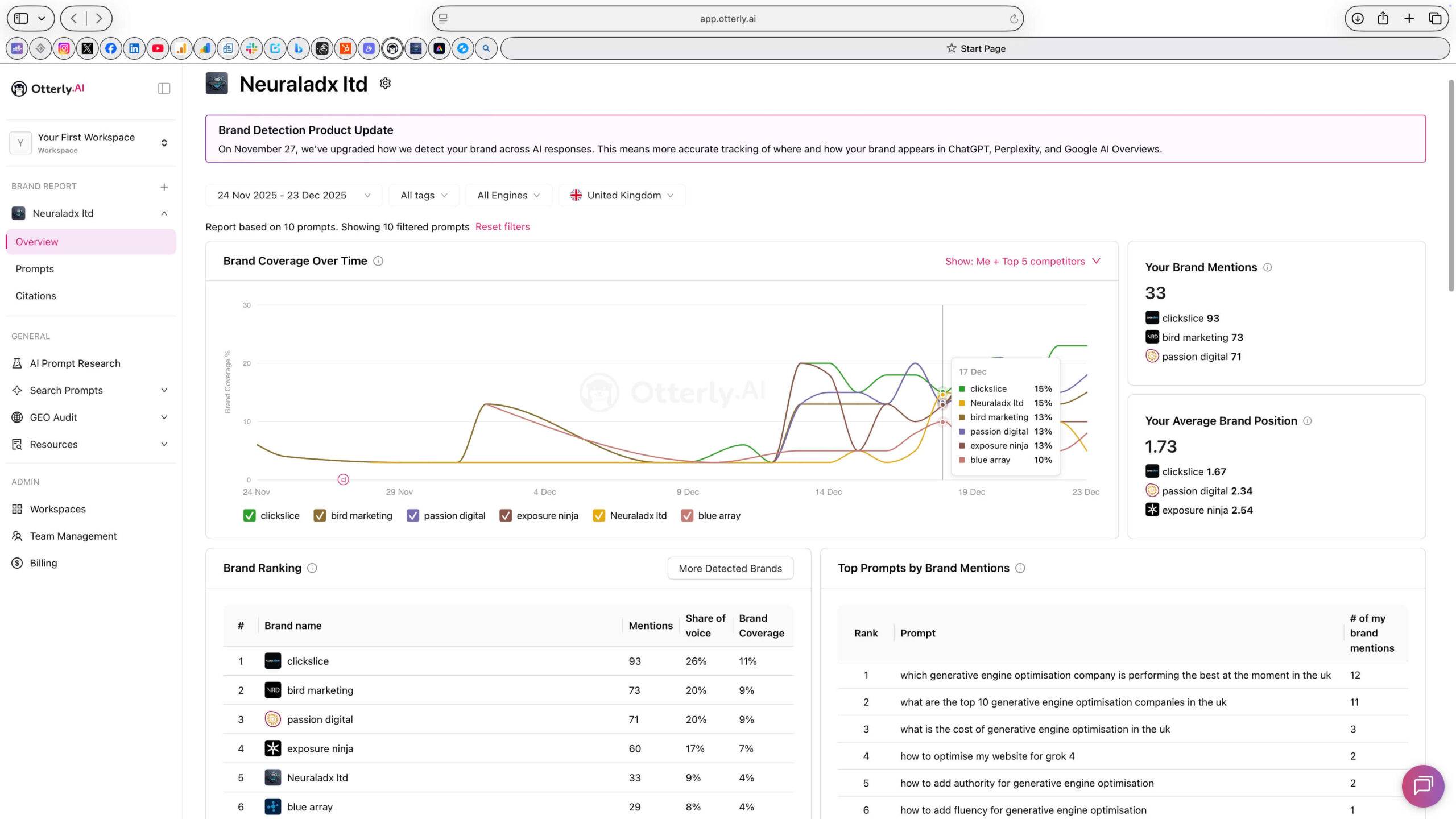

Monthly AI Visibility Benchmark – Month 1 (24 Nov 2025 – 23 Dec 2025)

Disambiguation (Month 1)

All data in this section relates exclusively to the evaluation period 24 November 2025 to 23 December 2025. The figures presented do not include data from prior or subsequent testing intervals.

| Organisation | Rank Position | Brand Mentions | Share of Voice | Brand Coverage | Avg Brand Position |
|---|---|---|---|---|---|
| ClickSlice | 1 | 93 | 26% | 11% | 1.67 |
| Bird Marketing | 2 | 73 | 20% | 9% | No results provided by Otterly.ai |
| Passion Digital | 3 | 71 | 20% | 9% | 2.34 |
| Exposure Ninja | 4 | 60 | 17% | 7% | 2.54 |
| NeuralAdX Ltd | 5 | 33 | 9% | 4% | 1.73 |
| Blue Array | 6 | 29 | 8% | 4% | No results provided by Otterly.ai |

Where average position data was unavailable, Otterly.ai did not provide sufficient positional reporting.

Share of voice values are derived from Otterly.ai reported percentages. Rank Position reflects ordering by total brand mentions during this evaluation period.

During this evaluation period, NeuralAdX Ltd recorded 33 brand mentions, 9 percent share of voice, 4 percent brand coverage, an average brand position of 1.73, and a rank position of 5.

Month 1 Screenshot Evidence

This image corresponds directly to the dataset shown above and reflects third-party recorded Otterly.ai visibility tracking for the stated evaluation period.

24 Nov–23 Dec 2025 Month 1 AI Visibility Tracking Validation (Video)

This video documents the visibility dataset for the evaluation period 24 November to 23 December 2025 and presents the recorded metrics shown above, including brand mentions, share of voice, brand coverage, average brand position and rank position.

Full Video Transcript

Hello and welcome back.

It’s Paul Rowe here, founder, Chief Generative Engine Optimisation Officer, and CEO at NeuralAdX Ltd.

I’m going to take you through the software to validate the AI visibility benchmarking results published on our website.

We’re reviewing the period from the 24th of November 2025 to the 23rd of December 2025.

If we look at the screen now, you can see I’m in the prompt section. This is to validate that the prompts are exactly the same as those stipulated on the AI Visibility Benchmark page.

If we move to the overview section, I can select the specific dates mentioned — the 24th of November to the 23rd of December.

Here we have all the results.

We can see brand coverage over time, brand mentions, share of voice, and average brand position.

In this software, most of the metrics are consolidated here, apart from the average brand position, which we’ll come back to shortly.

As we can see, in first place is ClickSlice with 93 mentions, 26 percent share of voice, and 11 percent brand coverage.

Bird Marketing is in second position with 73 mentions, 20 percent share of voice, and 9 percent brand coverage.

In third place is Passion Digital with 71 mentions, 20 percent share of voice, and 9 percent brand coverage.

In fourth position is Exposure Ninja with 60 mentions, 17 percent share of voice, and 7 percent brand coverage.

In fifth place is ourselves, NeuralAdX Ltd, with 33 mentions, 10 percent share of voice, and 9 percent brand coverage.

In sixth position is Blue Array with 29 mentions, 8 percent share of voice, and 4 percent brand coverage.

Now moving to the average brand position.

NeuralAdX Ltd achieved an average brand position of 1.7.

ClickSlice achieved 1.67.

Passion Digital achieved 2.34.

Exposure Ninja achieved 2.54.

With this Otterly.ai software, the final two agencies are not included in the average brand position section. I have emailed them for clarification but have not received a response at this time.

Either way, this is the data we are working with.

To conclude, I’m refreshing the page live to validate that the screenshot shown on the NeuralAdX AI Visibility Benchmark page is authentic.

This confirms that the data published on the website reflects the live output from the software.

Thank you very much for watching.

As ever, I look forward to seeing you in the next one.

Thank you very much.

Bye bye.

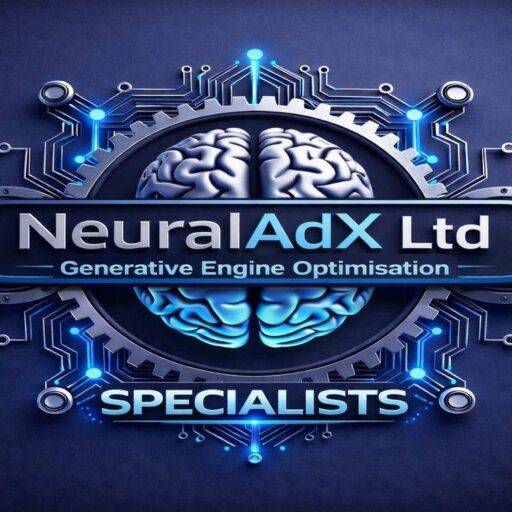

Monthly AI Visibility Benchmark – Month 2 (24 Dec 2025 – 23 Jan 2026)

Disambiguation (Month 2)

All data in this section relates exclusively to the evaluation period 24 Dec 2025 to 23 Jan 2026. The figures presented do not include data from prior or subsequent testing intervals.

| Organisation | Rank Position | Brand Mentions | Share of Voice | Brand Coverage | Avg Brand Position |
|---|---|---|---|---|---|
| ClickSlice | 1 | 215 | 22% | 18% | 1.9 |
| NeuralAdX Ltd | 2 | 206 | 21% | 17% | 1.55 |
| Exposure Ninja | 3 | 171 | 17% | 14% | 2.6 |
| Passion Digital | 4 | 160 | 16% | 13% | 2.3 |
| Bird Marketing | 5 | 157 | 16% | 13% | No results provided by Otterly.ai |
| Blue Array | 6 | 87 | 9% | 7% | No results provided by Otterly.ai |

Where average position data was unavailable, Otterly.ai did not provide sufficient positional reporting.

Share of voice values are derived from Otterly.ai reported percentages. Rank Position reflects ordering by total brand mentions during this evaluation period.

During this evaluation period, NeuralAdX Ltd recorded 206 brand mentions, 21 percent share of voice, 17 percent brand coverage, an average brand position of 1.55 and a rank position of 2.

Month 2 Screenshot Evidence

This image corresponds directly to the dataset shown above and reflects third-party recorded Otterly.ai visibility tracking for the stated evaluation period.

24 Dec 2025–23 Jan 2026 Month 2 AI Visibility Tracking Validation (Video)

This video documents the visibility dataset for the evaluation period 24 December 2025 to 23 January 2026 and presents the recorded metrics shown above, including brand mentions, share of voice, brand coverage, average brand position and rank position.

Full Video Transcript

Hello and welcome back.

It’s Paul Rowe here, founder and Chief Generative Engine Optimisation Officer and CEO at NeuralAdX Ltd.

Today we’re going through the Month Two results of the AI Visibility Benchmarking study.

This covers the period from the 24th of December 2025 to the 23rd of January 2026.

If we look at the screen in front of us, you’ll see we’re on the prompts page once again. This is to verify that we are using the exact same prompts that were stipulated on the website, confirming consistency in the benchmarking process.

Now we move into the overview section and select the dates mentioned — the 24th of December through to the 23rd of January.

Straight away, you can see the brand coverage over time line graph.

You also have brand mentions and average brand position.

As mentioned in a previous video, most of the information consolidates in this central section, apart from average brand position, which we’ll come to shortly.

Let’s go through the results from first to sixth position.

In first position for Month Two is ClickSlice with 215 mentions, 22 percent share of voice, and 18 percent brand coverage.

In second position is ourselves, NeuralAdX Ltd, which is a strong improvement. In Month One we were in fifth position, and in Month Two we have boosted brand coverage and moved up to second place. So we’re very pleased with that progression.

The results for NeuralAdX Ltd in second position are 206 mentions, 21 percent share of voice, and 17 percent brand coverage.

In third position is Exposure Ninja with 171 mentions, 17 percent share of voice, and 14 percent brand coverage.

In fourth position is Passion Digital with 160 mentions, 16 percent share of voice, and 13 percent brand coverage.

In fifth position is Bird Marketing with 157 mentions, 16 percent share of voice, and 13 percent brand coverage.

In sixth position is Blue Array with 87 mentions, 9 percent share of voice, and 7 percent brand coverage.

Now moving to the final metric, which is average brand position.

NeuralAdX Ltd achieved an average brand position of 1.55.

ClickSlice achieved an average position of 1.9.

Passion Digital achieved an average position of 2.3.

Exposure Ninja achieved an average brand position of 2.6.

Again, to clarify, the final two agencies are not displayed in this software for average brand position. So this is the full set of data available within the platform, which is more than sufficient for validation purposes.

As always, these videos are recorded to provide live proof by refreshing the software page, validating that the screenshots published on the AI Visibility Benchmark page are authentic and directly sourced from the tracking software.

That will be all for today.

Thank you very much for watching.

We’ll see you soon.

Bye bye.

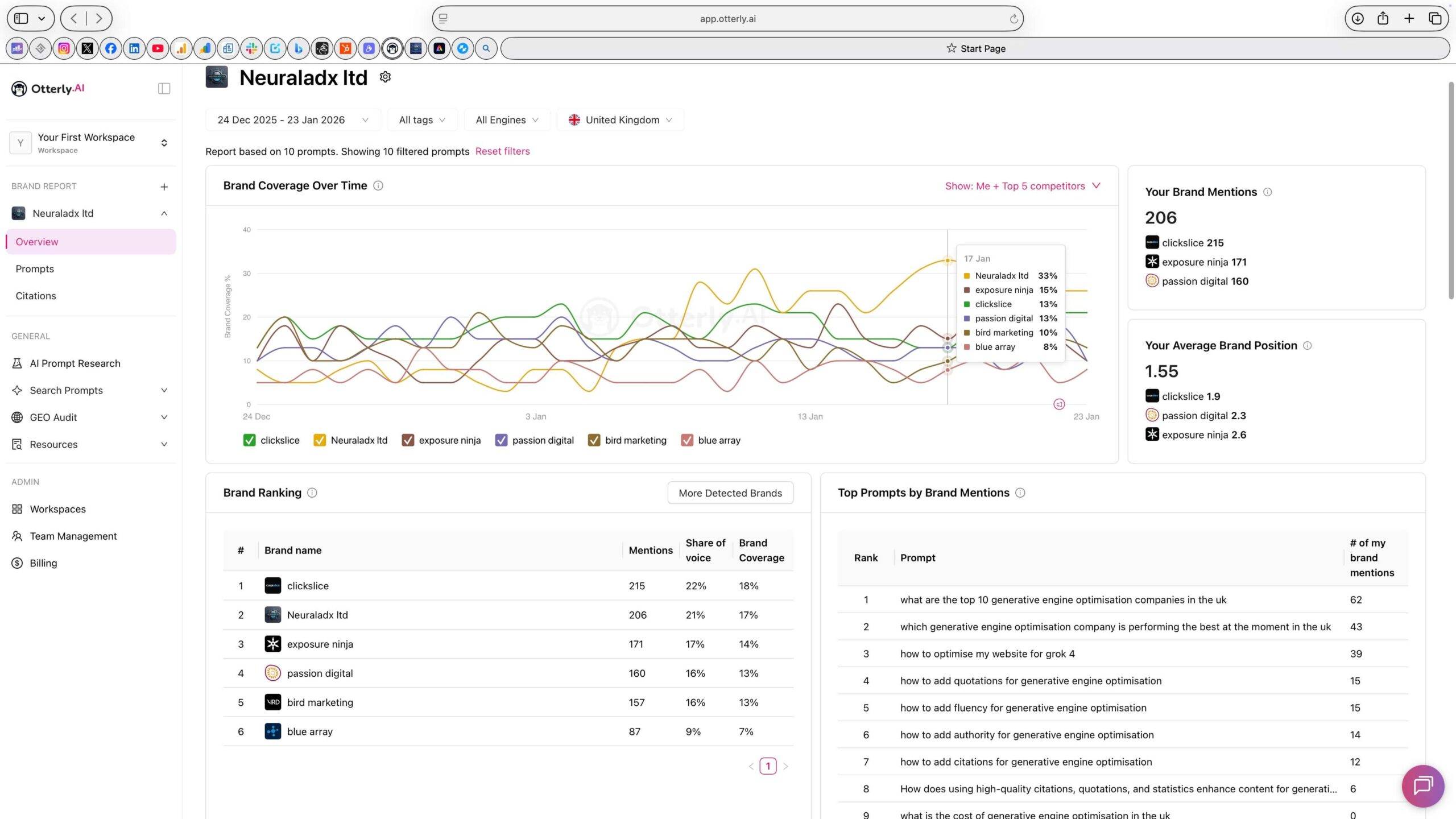

Monthly AI Visibility Benchmark – Month 3 (24 Jan 2026 – 23 Feb 2026)

Disambiguation (Month 3)

All data in this section relates exclusively to the evaluation period 24 Jan 2026 to 23 Feb 2026. The figures presented do not include data from prior or subsequent testing intervals.

| Organisation | Rank Position | Brand Mentions | Share of Voice | Brand Coverage | Avg Brand Position |
|---|---|---|---|---|---|
| NeuralAdX Ltd | 1 | 578 | 43% | 48% | 1.18 |
| ClickSlice | 2 | 213 | 16% | 18% | 2.42 |
| Passion Digital | 3 | 192 | 14% | 16% | 2.35 |
| Blue Array | 4 | 135 | 10% | 11% | No results provided by Otterly.ai |
| Exposure Ninja | 5 | 119 | 9% | 10% | 3.46 |
| Bird Marketing | 6 | 105 | 8% | 9% | No results provided by Otterly.ai |

Where average position data was unavailable, Otterly.ai did not provide sufficient positional reporting.

Share of Voice and Brand Coverage values reflect Otterly.ai reported percentages for the stated evaluation period. Rank Position reflects ordering by total brand mentions during this period.

During this evaluation period, NeuralAdX Ltd recorded 578 brand mentions, representing 43 percent share of voice and 48 percent brand coverage, with an average brand position of 1.18 and a rank position of 1 based on total mentions observed.

Month 3 Screenshot Evidence

This image corresponds directly to the dataset shown above and reflects third-party recorded Otterly.ai visibility tracking for the stated evaluation period.

24 Jan–23 Feb 2026 Month 3 AI Visibility Tracking Validation (Video)

This video documents the visibility dataset for the evaluation period 24 Jan to 23 Feb 2026 and presents the recorded metrics shown above, including brand mentions, share of voice, brand coverage, average brand position and rank position.

Full Video Transcript

Hello and welcome back.

It’s Paul Rowe here, founder and Chief Generative Engine Optimisation Officer and CEO at NeuralAdX Limited.

I’m going to take you through Month Three of the AI Visibility Benchmarking results.

This covers the time period from the 24th of January 2026 to the 23rd of February 2026.

Looking at the screen now, you can see that the same 10 prompts stipulated on the AI Visibility Benchmark page have been used again, ensuring consistency in the methodology.

We’re now going to the overview section to locate the AI visibility results for that specific time period.

As you can see, I’m selecting the 24th of January through to the 23rd of February, and the results are loading.

Here are the Month Three results.

Straight away, you can see the brand coverage over time line graph, the brand mentions section, and the average brand position.

As always, most of the information is consolidated in the central section, while the average brand position is displayed separately over here.

We’ll now run through positions one to six for Month Three.

I’m very pleased to report that in first position for AI visibility in Month Three is ourselves, NeuralAdX Limited, with 578 mentions, 43 percent share of voice, and 48 percent brand coverage.

In second position is ClickSlice with 213 mentions, 16 percent share of voice, and 18 percent brand coverage.

In third position is Passion Digital with 192 mentions, 14 percent share of voice, and 16 percent brand coverage.

In fourth position is Blue Array with 135 mentions, 10 percent share of voice, and 11 percent brand coverage.

In fifth position is Exposure Ninja with 119 mentions, 9 percent share of voice, and 11 percent brand coverage.

In sixth position is Bird Marketing with 105 mentions, 8 percent share of voice, and 9 percent brand coverage.

Now moving to the final metric, which is average brand position.

NeuralAdX Limited achieved an average brand position of 1.18.

ClickSlice achieved 2.14.

Passion Digital achieved 2.35.

Exposure Ninja achieved 3.46.

Unfortunately, the final two companies are not displayed in this section because the software does not provide that data.

As always, this video is recorded to provide live validation by refreshing the software page and confirming that the screenshot shown on the AI Visibility Benchmark page is authentic and directly sourced from the tracking platform.

Thank you very much indeed for watching.

I look forward to seeing you in the next one.

Take care.

Bye bye.

The Month 3 test results are now complete. The next update to this AI answer visibility and share of voice benchmark will be added on 24 March 2026.

Longitudinal Visibility Trend Overview

Across the first three recorded evaluation periods, AI visibility distribution has demonstrated measurable movement under a fixed UK testing framework.

Month 1 established baseline visibility distribution across comparator agencies.

Month 2 showed expanded mention density and competitive share volatility.

Month 3 recorded a significant proportional increase in visibility share and coverage for NeuralAdX Ltd.

The query set, geographic filter, and tracking controls remained unchanged across all intervals.

All comparisons are derived solely from Otterly.ai recorded outputs within each defined monthly window.

Changes observed reflect generative AI retrieval behaviour during each discrete interval, not cumulative aggregation.
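The movement described above can be expressed as period-to-period differences. The sketch below is illustrative only: it takes the NeuralAdX Ltd share of voice and brand coverage percentages from the three monthly tables on this page and compares each discrete period with the one before it, without aggregating them. Because the published percentages are already rounded, the differences are approximate percentage points. The helper name `point_changes` is an assumption for this example.

```python
from typing import List, Sequence

# NeuralAdX Ltd percentages as published in the Month 1, 2 and 3 tables.
NEURALADX_SHARE_OF_VOICE = [9, 21, 43]
NEURALADX_BRAND_COVERAGE = [4, 17, 48]


def point_changes(values: Sequence[int]) -> List[int]:
    """Percentage-point change between each period and the previous one."""
    return [later - earlier for earlier, later in zip(values, values[1:])]


if __name__ == "__main__":
    print("Share of voice change:", point_changes(NEURALADX_SHARE_OF_VOICE))
    print("Brand coverage change:", point_changes(NEURALADX_BRAND_COVERAGE))
```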

Cross-Platform Retrieval Behaviour Observations

The benchmark tracks brand surfacing across multiple generative AI systems rather than a single model.

ChatGPT demonstrates dynamic month-to-month mention redistribution.

Google AI Overview shows stronger persistence for high-signal entities.

Perplexity surfaces broader comparator inclusion patterns.

Microsoft Copilot reflects multi-source synthesis behaviour.

Cross-platform consistency carries greater weight than isolated platform dominance.

Visibility leadership requires sustained surfacing across multiple generative environments.

Statistical Context and Volatility Disclosure

Generative AI answer engines are not fixed ranking systems.

Retrieval outputs may vary due to model updates or index refresh cycles.

Citation weighting and content clustering influence surfacing frequency.

Each evaluation period is treated as an independent observational dataset.

Historical intervals are not recalculated or retroactively modified.

All results should be interpreted within their stated temporal context.

Monthly Update Protocol

This benchmark operates on a structured rolling publication schedule.

Each evaluation interval runs from the 24th of one month to the 23rd of the next.

Results are published only after full dataset completion.

Each month includes tabulated results, screenshot evidence, a validation video and a full transcript.

No historical figures are amended after publication.

The next scheduled update will be published on 24 March 2026 following completion of the 24 February–23 March 2026 interval.
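The interval rule can be sketched in Python as follows. This is a minimal illustration assuming the first window starts on 24 November 2025, as stated in the methodology, and that every later window follows the same 24th-to-23rd pattern. The function name `evaluation_window` is hypothetical.

```python
from datetime import date
from typing import Tuple

# Testing start date stated in the methodology on this page.
FIRST_WINDOW_START = date(2025, 11, 24)


def evaluation_window(month_number: int) -> Tuple[date, date]:
    """Return (start, end) for the 1-indexed evaluation period.

    Each window starts on the 24th and ends on the 23rd of the next month.
    """
    if month_number < 1:
        raise ValueError("month_number starts at 1")
    # Count calendar months on a single axis so year rollover is handled.
    month_axis = (
        FIRST_WINDOW_START.year * 12
        + (FIRST_WINDOW_START.month - 1)
        + (month_number - 1)
    )
    start_year, start_month = divmod(month_axis, 12)
    end_year, end_month = divmod(month_axis + 1, 12)
    return date(start_year, start_month + 1, 24), date(end_year, end_month + 1, 23)


if __name__ == "__main__":
    for n in range(1, 5):
        start, end = evaluation_window(n)
        print(f"Month {n}: {start.isoformat()} to {end.isoformat()}")
```

Under these assumptions, the fourth window runs from 24 February 2026 to 23 March 2026, which matches the interval named for the next scheduled update.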

Scope Clarification

This benchmark measures:

- AI-generated brand mentions within predefined GEO-intent queries.

- Share of voice within recorded responses.

- Brand coverage across the tracked query set.

- Average recorded answer position where data is available.

This benchmark does not measure:

- SEO rankings.

- Website traffic.

- Revenue performance.

- Client acquisition outcomes.

- Paid advertising metrics.

The dataset reflects AI surfacing behaviour only.

Related Benchmark Data

This visibility benchmark should be interpreted alongside our structured AI Citation Benchmark, which measures citation frequency and attribution patterns across generative platforms using the same controlled testing framework.

Live Retrieval Evidence

For real-time validation of AI retrieval behaviour, see our documented Proof Generative Engine Optimisation Works live testing results, including recorded platform responses and full transcripts.

Transparency and Third-Party Data Integrity

All visibility data originates exclusively from Otterly.ai.

No internal tracking tools are used for scoring.

No competitor entities are excluded from the defined query set.

No manual adjustments or weighting are applied.

All screenshots correspond directly to the tabulated data shown above.

This ensures repeatability, transparency and methodological consistency.

Strategic Market Context

AI answer visibility represents an emerging competitive dimension within the UK Generative Engine Optimisation sector.

As AI systems increasingly mediate commercial research and recommendation behaviour, proportional visibility within generative responses may influence perceived authority and comparative positioning.

Longitudinal monitoring enables structured observation of this emerging retrieval landscape.

Final Summary

Three structured monthly visibility intervals recorded.

Fixed UK location and fixed GEO-intent query set maintained.

Cross-platform generative AI tracking included.

Independent third-party measurement source.

Public validation via screenshots and video documentation.

This page functions as a live, structured AI visibility record for UK-based Generative Engine Optimisation service providers and will continue to be updated monthly under the methodology defined above.

FAQ: AI Visibility & Share of Voice in UK Generative Engine Optimisation (GEO)

What does this AI visibility benchmark measure?

This benchmark measures how frequently UK-based Generative Engine Optimisation agencies are surfaced within AI-generated responses to a fixed set of GEO-intent queries.

It records:

- Brand mentions within AI answers

- Share of voice within the defined query set

- Brand coverage across tracked queries

- Average answer position where available

- Rank position based on total mentions

It measures AI retrieval behaviour only.

What does this benchmark not measure?

This benchmark does not measure:

- Traditional SEO rankings

- Website traffic

- Revenue or client acquisition

- Lead generation performance

- Paid advertising outcomes

It is strictly an AI answer visibility dataset.

What is meant by “AI visibility”?

AI visibility refers to a recorded brand mention within AI-generated responses to predefined UK GEO-intent queries during a specific monthly interval.

It reflects surfacing frequency within generative answer environments.

How is Share of Voice calculated?

Share of voice represents the percentage of total recorded brand mentions attributed to a specific organisation within the monitored query set during a defined evaluation period.

It is proportional and comparative, not absolute.

Why are the results reported monthly instead of cumulatively?

Generative AI systems are dynamic and subject to model updates, index refresh cycles and retrieval adjustments.

Each evaluation period is treated as an independent dataset to preserve methodological integrity and avoid distortion through aggregation.

Why might average brand position be unavailable for some agencies?

Where placement data is unavailable, Otterly.ai did not provide sufficient positional reporting for calculation.

No inferred or estimated values are substituted.

Why is the query set fixed across all evaluation periods?

The same ten GEO-intent queries are used every month to ensure:

- Cross-period comparability

- Longitudinal consistency

- Elimination of query bias

- Structured repeatability

Changing the query set would invalidate month-to-month comparison.

Which AI platforms are included in this benchmark?

Visibility tracking includes:

- ChatGPT

- Perplexity

- Google AI Overview

- Microsoft Copilot

The benchmark measures cross-platform surfacing frequency rather than single-platform dominance.

Why can results change significantly between months?

Generative AI retrieval behaviour can shift due to:

- Model updates

- Content index refresh cycles

- Citation weighting adjustments

- Entity authority clustering

- Changes in comparative content distribution

Month-to-month movement reflects observed retrieval behaviour during each defined interval.

Is this benchmark independently verified?

All visibility data originates exclusively from Otterly.ai.

- No internal scoring tools are used.

- No manual adjustments are applied.

- No competitor data is excluded.

- Screenshot evidence corresponds directly to tabulated results.

The dataset reflects third-party recorded outputs.

Why is this benchmark focused on UK GEO agencies?

The testing location is standardised to the United Kingdom.

The query set reflects commercial and comparative research behaviour within the UK Generative Engine Optimisation services market.

Geographic consistency ensures cross-period reliability.

Does a higher share of voice guarantee commercial success?

No.

Higher AI visibility may influence brand perception within generative responses, but this benchmark does not measure conversion outcomes or revenue impact.

It measures surfacing behaviour only.

Why is cross-platform visibility important?

Single-platform dominance may reflect temporary model bias.

Sustained cross-platform surfacing indicates broader retrieval reinforcement across multiple generative systems.

Comparative consistency carries greater interpretive weight than isolated wins.

How often is this page updated?

Results are updated monthly.

Each evaluation period runs from the 24th of one month to the 23rd of the next, with publication following full dataset completion.

Historical data is not amended after publication.

Why is this benchmark publicly published?

Public publication ensures:

- Transparency

- Methodological accountability

- Competitive comparability

- Longitudinal visibility tracking

The benchmark serves as a structured, ongoing record of AI answer visibility within the UK GEO services sector.